Jul 21, 2022

AI-assisted analysis of three-dimensional galaxy distribution in our universe


(Nanowerk News) By applying a machine-learning technique, a neural network method, to gigantic amounts of simulation data about the formation of cosmic structures in the universe, a team of researchers has developed a very fast and highly efficient software program that can make theoretical predictions about structure formation. By comparing model predictions to actual observational datasets, the team succeeded in accurately measuring cosmological parameters, reports a study in Physical Review D ("Full-shape cosmology analysis of SDSS-III BOSS galaxy power spectrum using emulator-based halo model: a 5% determination of σ8").


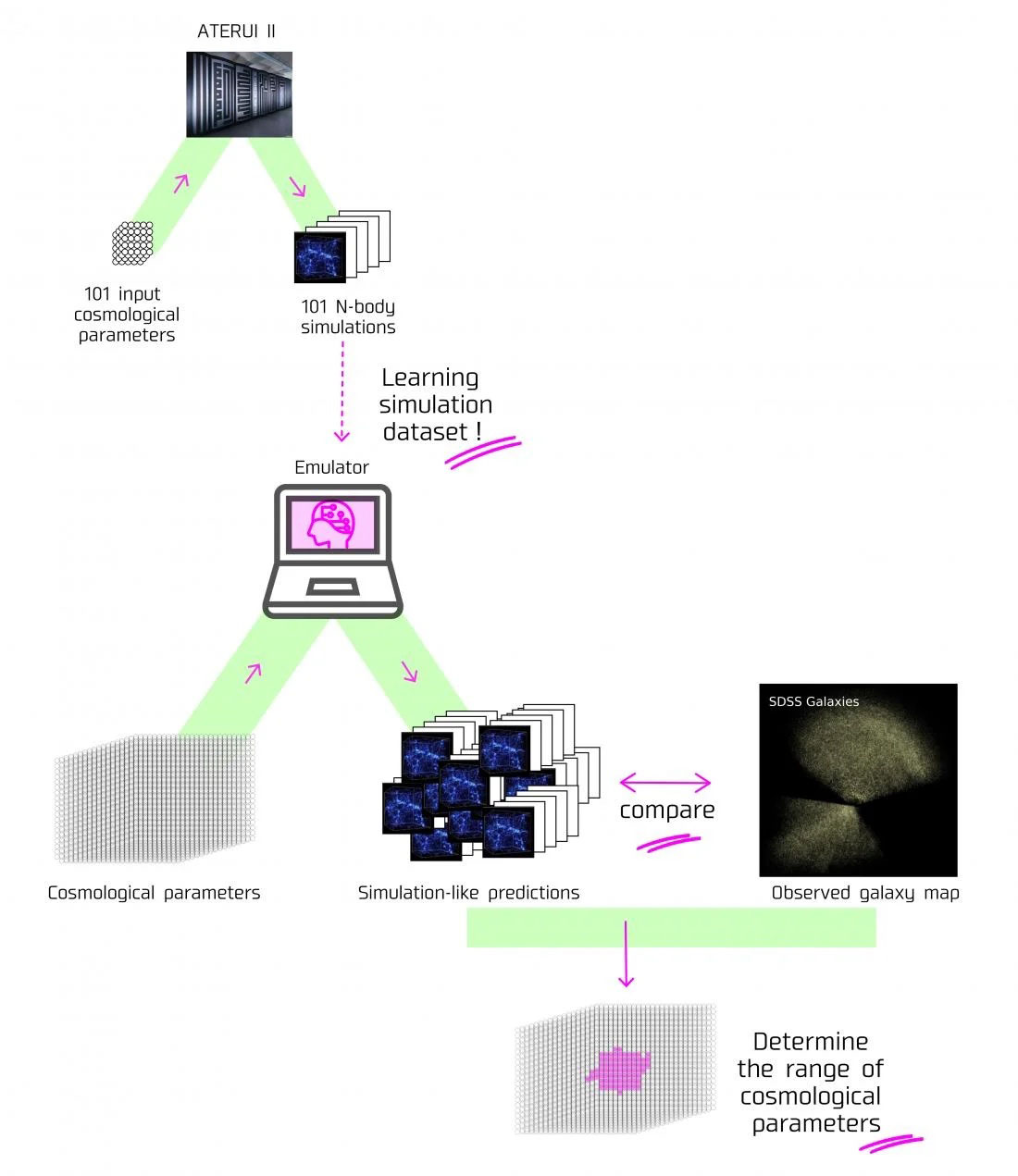

Flow chart of how the emulator developed by the research team works. (Image: Kavli IPMU, NAOJ)


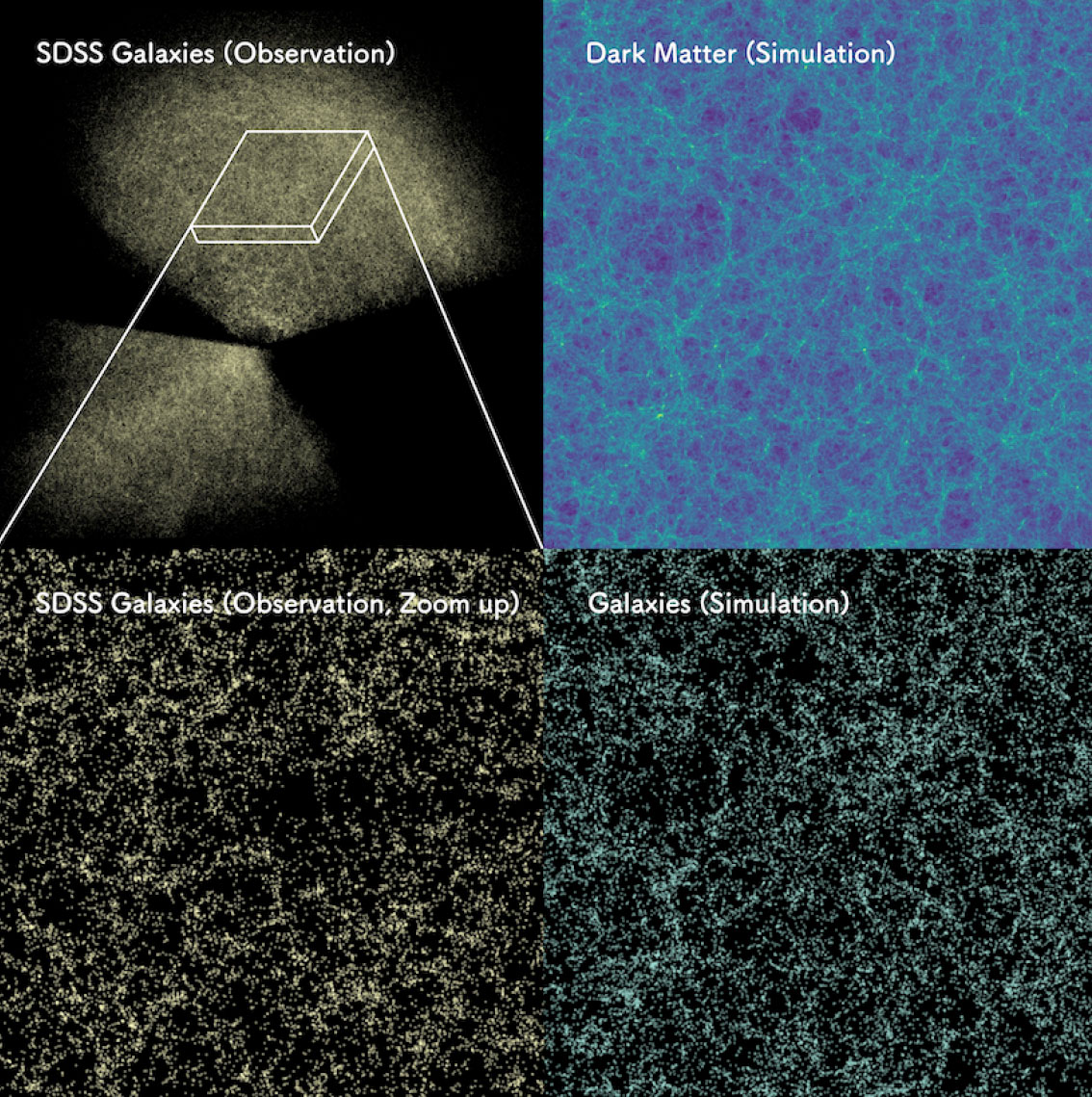

When the Sloan Digital Sky Survey (SDSS), the world's largest galaxy survey to date, created a three-dimensional map of the universe from the observed distribution of galaxies, it became clear that the distribution had characteristic features. Some galaxies clump together or spread out in filaments, and in some places there are voids where no galaxies exist at all. All of this shows that galaxies did not evolve in a uniform way; they formed as a result of their local environment.


In general, researchers agree that this non-uniform distribution of galaxies results from the gravity of “invisible” dark matter, the mysterious matter that no one has yet directly observed. By studying the three-dimensional map of galaxies in detail, researchers could uncover fundamental quantities such as the amount of dark matter in the universe.


In recent years, N-body simulations have been widely used to recreate the formation of cosmic structures in the universe. These simulations represent the initial inhomogeneities at high redshifts with a large number of N-body particles that effectively stand in for dark matter particles, and then follow how the dark matter distribution evolves over time by computing the gravitational forces between particles in an expanding universe. However, the simulations are usually expensive, taking tens of hours to complete on a supercomputer, even for one cosmological model.
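To make the idea concrete, here is a minimal sketch of a single N-body time step in Python. It is a toy under stated assumptions, not the code used in the study: it ignores the expansion of the universe, works in made-up units with a softening length chosen for illustration, and the names `accelerations`, `leapfrog_step` and `total_momentum` are hypothetical.

```python
import math

G = 1.0           # gravitational constant in toy units (assumption)
SOFTENING = 0.05  # softening length that avoids singular forces (assumption)

def accelerations(pos, mass):
    """Direct O(N^2) sum of softened gravitational accelerations."""
    n = len(pos)
    acc = [[0.0, 0.0, 0.0] for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if i == j:
                continue
            d = [pos[j][k] - pos[i][k] for k in range(3)]
            r2 = sum(c * c for c in d) + SOFTENING ** 2
            inv_r3 = 1.0 / (r2 * math.sqrt(r2))
            for k in range(3):
                acc[i][k] += G * mass[j] * d[k] * inv_r3
    return acc

def leapfrog_step(pos, vel, mass, dt):
    """Advance all particles by one kick-drift-kick leapfrog step."""
    acc = accelerations(pos, mass)
    vel = [[v[k] + 0.5 * dt * a[k] for k in range(3)] for v, a in zip(vel, acc)]
    pos = [[p[k] + dt * v[k] for k in range(3)] for p, v in zip(pos, vel)]
    acc = accelerations(pos, mass)
    vel = [[v[k] + 0.5 * dt * a[k] for k in range(3)] for v, a in zip(vel, acc)]
    return pos, vel

def total_momentum(vel, mass):
    """Total momentum vector, a quantity the pairwise forces should conserve."""
    return [sum(m * v[k] for m, v in zip(mass, vel)) for k in range(3)]
```

The double loop over particle pairs is what makes the cost grow so quickly with particle number. Production codes typically replace it with tree or particle-mesh methods, but even then a single cosmological model remains costly.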


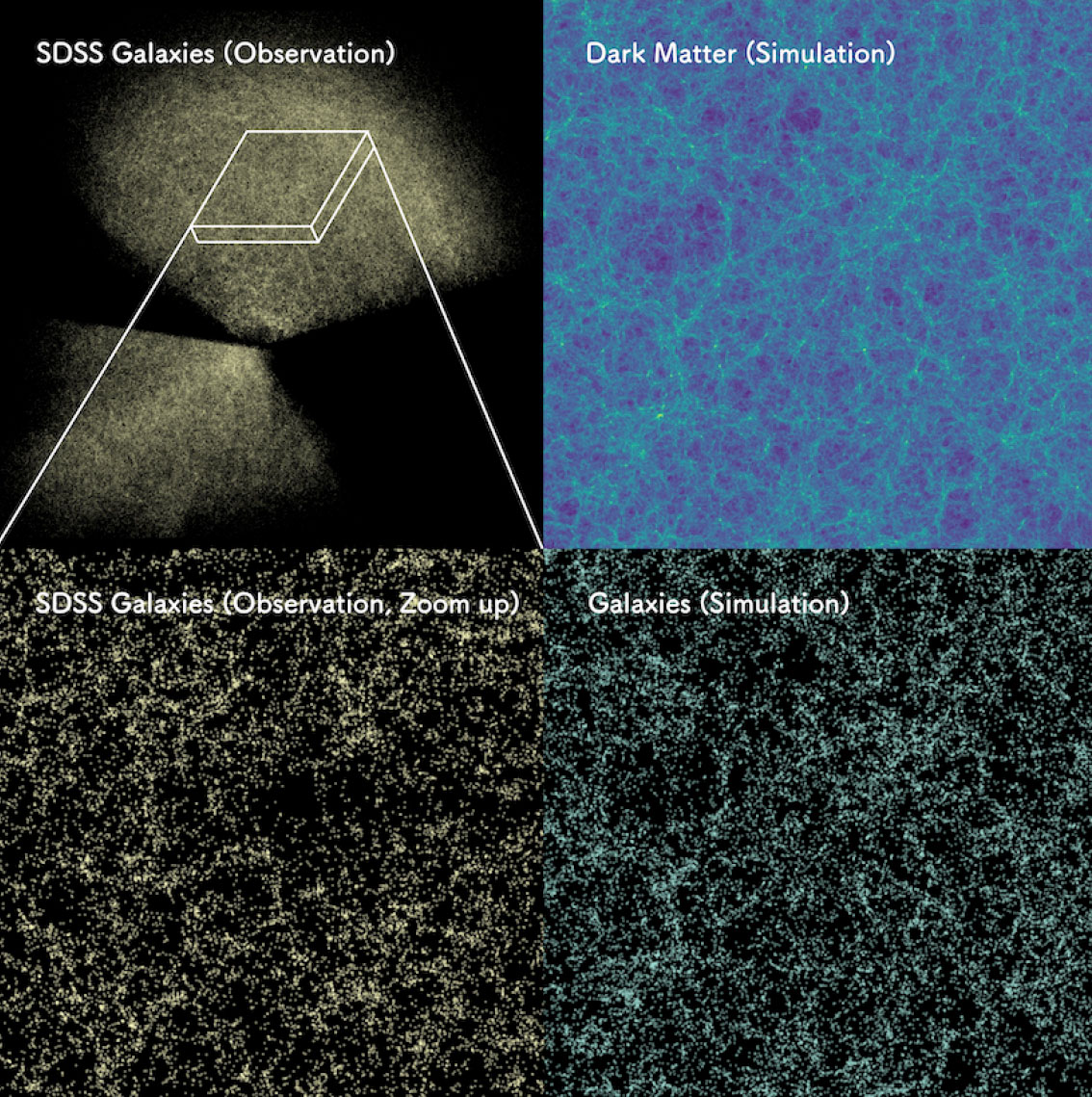

Distribution of about 1 million galaxies observed by Sloan Digital Sky Survey (top left) and a zoom-in image of the thin rectangular region (bottom left). This can be compared to the distribution of invisible dark matter predicted by supercomputer simulation assuming the cosmological model that our AI derives (top right). The bottom right shows the distribution of mock galaxies that are formed in regions with high dark matter density. The predicted galaxy distribution shares the characteristic patterns such as galaxy clusters, filaments and voids seen in the actual SDSS data. (Image: Takahiro Nishimichi)


A team of researchers, led by former Kavli Institute for the Physics and Mathematics of the Universe (Kavli IPMU) Project Researcher Yosuke Kobayashi (currently Postdoctoral Research Associate at The University of Arizona), and including Kavli IPMU Professor Masahiro Takada and Kavli IPMU Visiting Scientists Takahiro Nishimichi and Hironao Miyatake, combined machine learning with numerical simulation data from the supercomputer “ATERUI II” at the National Astronomical Observatory of Japan (NAOJ) to generate theoretical calculations of the power spectrum, the most fundamental quantity measured from galaxy surveys, which tells researchers statistically how galaxies are distributed in the universe.


Usually, several million N-body simulations would need to be run, but Kobayashi’s team was able to use machine learning to teach their program to calculate the power spectrum at the same level of accuracy as a simulation, even for a cosmological model for which the simulation had not yet been run. This technology is called an emulator, and is already being used in computer science fields outside of astronomy.
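The emulator idea can be illustrated with a small Python sketch: run the costly calculation at a few parameter values, fit a cheap surrogate to those runs, and then query the surrogate at values that were never simulated. The study uses a neural network; the sketch below swaps in plain piecewise-linear interpolation in a single parameter as the simplest possible stand-in. The function `expensive_simulation`, its analytic form, and the class `LinearEmulator` are hypothetical and exist only for illustration.

```python
import bisect
import math

def expensive_simulation(omega_m):
    """Hypothetical stand-in for a costly simulation: returns one number
    (think of a power-spectrum amplitude) for a given matter fraction.
    The analytic form is made up purely for illustration."""
    return math.exp(-2.0 * omega_m) + 0.5 * omega_m ** 2

class LinearEmulator:
    """Piecewise-linear surrogate trained on a handful of simulation runs."""

    def __init__(self, params, outputs):
        pairs = sorted(zip(params, outputs))
        self.x = [p for p, _ in pairs]
        self.y = [o for _, o in pairs]

    def predict(self, value):
        if not self.x[0] <= value <= self.x[-1]:
            raise ValueError("parameter outside the training range")
        # Locate the two training points that bracket the requested value.
        i = bisect.bisect_right(self.x, value)
        i = min(max(i, 1), len(self.x) - 1)
        x0, x1 = self.x[i - 1], self.x[i]
        t = (value - x0) / (x1 - x0)
        return (1.0 - t) * self.y[i - 1] + t * self.y[i]

# "Training": run the costly code only at a few parameter values.
train_params = [0.20 + 0.02 * k for k in range(11)]  # roughly 0.20 to 0.40
emulator = LinearEmulator(train_params,
                          [expensive_simulation(p) for p in train_params])

# Prediction at a parameter value that was never simulated.
unseen = 0.31
approx = emulator.predict(unseen)
exact = expensive_simulation(unseen)
relative_error = abs(approx - exact) / exact
```

The surrogate refuses to extrapolate beyond its training range, which reflects a general caveat for emulators: their accuracy is only established inside the region of parameter space covered by the training simulations.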


"By combining machine learning with numerical simulations, which cost a lot, we have been able to analyze data from astronomical observations with high precision. These emulators have been used in cosmology studies before, but hardly anyone has been able to take into account the numerous other effects, which would compromise cosmological parameter results using real galaxy survey data. Our emulator does and has been able to analyze real observation data. This study has opened up a new frontier to large-scale structural data analysis," said the lead author Kobayashi.


However, to apply the emulator to actual galaxy survey data, the team had to take into account “galaxy bias” uncertainty: researchers cannot accurately predict where galaxies form in the universe because of the complicated physics inherent in galaxy formation. To overcome this difficulty, the team focused on simulating the distribution of dark matter “halos”, regions with a high density of dark matter and a high probability of galaxies forming. The team succeeded in making a flexible model prediction for a given cosmological model by introducing a sufficient number of “nuisance” parameters to take into account the galaxy bias uncertainty.


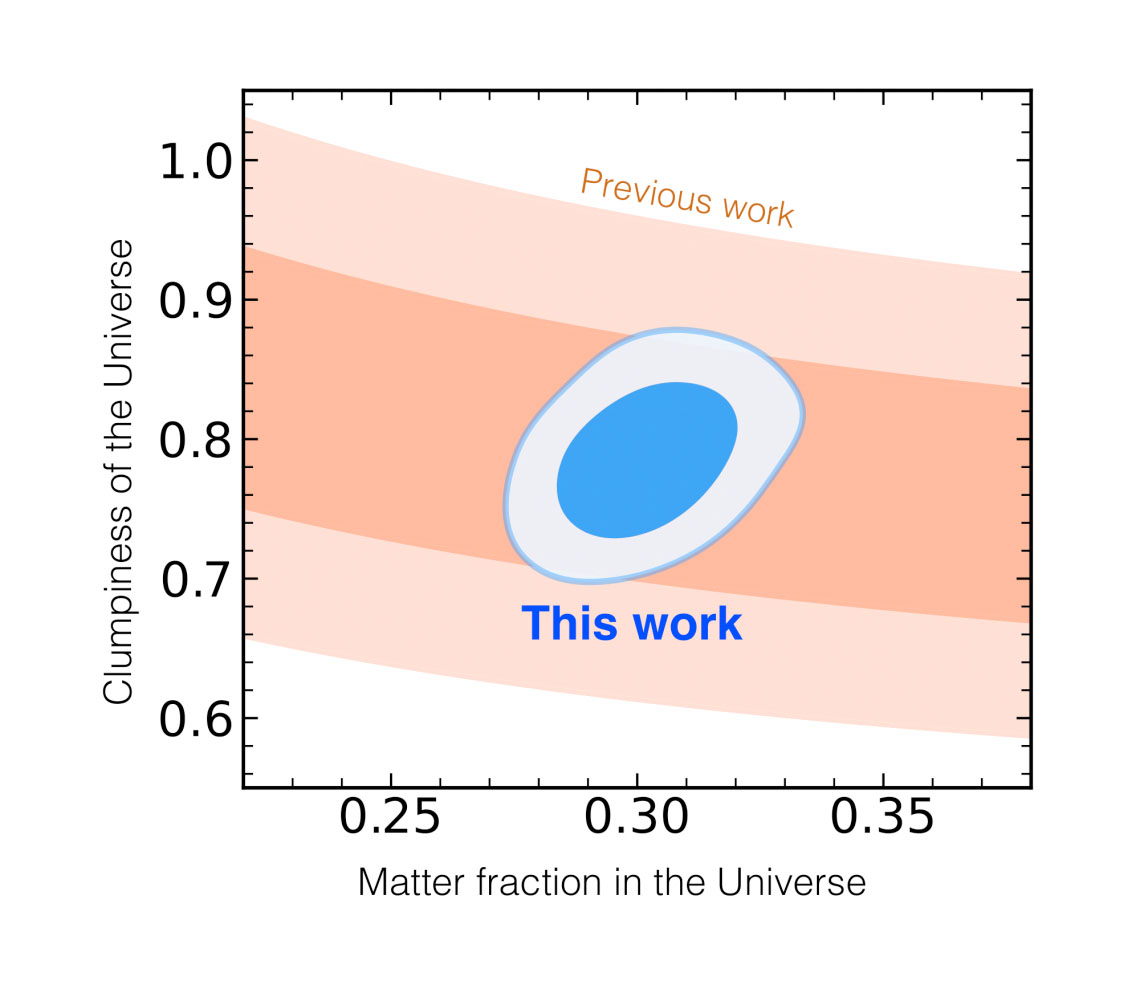

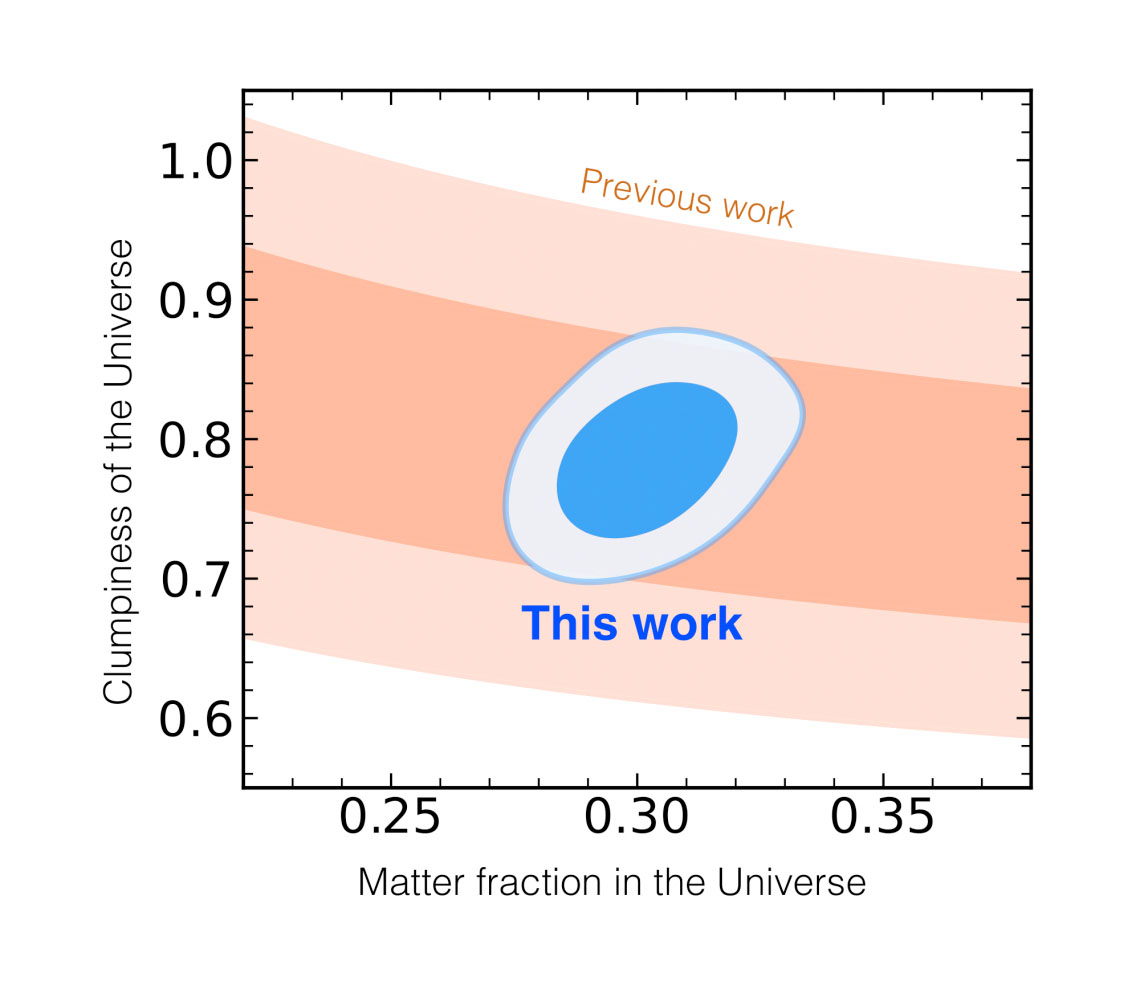

A comparison of the Sloan Digital Sky Survey’s three-dimensional galaxy map and the results generated by the emulator developed by Kobayashi et al. The x-axis shows the fraction of matter in the current universe, and the y-axis shows the physical parameter corresponding to the clumpiness of the current universe (the bigger the number, the more galaxies exist in that universe). The light blue and dark blue bands correspond to the 68% and 95% confidence regions, the areas within which the true values for our universe are expected to lie with that probability. The orange band corresponds to results from the SDSS. (Image: Yosuke Kobayashi)


Then the team compared the model prediction to an actual SDSS data set, and successfully measured cosmological parameters to high precision. It confirms as an independent analysis that only about 30 per cent of all energy comes from matter (mainly dark matter), and that the remaining 70 per cent is the result of dark energy causing the accelerated expansion of the universe.
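As a rough illustration of what comparing a model prediction to data involves, the Python sketch below fits one parameter of interest while accounting for one nuisance parameter. It is a toy under stated assumptions and not the analysis pipeline of the study: the spectrum shape, the constant offset used as the nuisance parameter, the mock data and all function names are made up for the example.

```python
def shape(k):
    """Made-up smooth spectrum shape, used only for this illustration."""
    return 1.0 / (1.0 + (k / 0.1) ** 2)

def model(k, amplitude, offset):
    """Toy prediction: a parameter of interest (amplitude) plus a
    nuisance parameter (a constant offset)."""
    return amplitude * shape(k) + offset

def profiled_chi2(amplitude, ks, data, sigma):
    """Chi-square at fixed amplitude, minimized over the nuisance offset.
    With equal error bars the best offset is the mean residual."""
    residuals = [d - amplitude * shape(k) for k, d in zip(ks, data)]
    best_offset = sum(residuals) / len(residuals)
    chi2 = sum(((r - best_offset) / sigma) ** 2 for r in residuals)
    return chi2, best_offset

def fit_amplitude(ks, data, sigma, grid):
    """Scan a grid of amplitudes; return the best (chi2, amplitude, offset)."""
    best = None
    for amplitude in grid:
        chi2, offset = profiled_chi2(amplitude, ks, data, sigma)
        if best is None or chi2 < best[0]:
            best = (chi2, amplitude, offset)
    return best

# Noise-free mock "observations" generated from known inputs (assumed values).
ks = [0.02 * (i + 1) for i in range(10)]
true_amplitude, true_offset = 0.8, 0.05
data = [model(k, true_amplitude, true_offset) for k in ks]

grid = [0.5 + 0.01 * i for i in range(61)]  # amplitudes from 0.50 to 1.10
chi2, amplitude_hat, offset_hat = fit_amplitude(ks, data, sigma=0.01, grid=grid)
```

Because every trial value of the parameter of interest requires a fresh model prediction, a fit like this is only practical when predictions are cheap, which is the role the emulator plays.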


They also succeeded in measuring the clumpiness of matter in our universe, whereas the conventional method used to analyze 3D galaxy maps was not able to determine these two parameters simultaneously. The precision of their parameter measurement exceeds that obtained by previous analyses of galaxy surveys. These results demonstrate the effectiveness of the emulator developed in this study.


The next step for the research team will be to continue to study dark matter mass and the nature of dark energy by applying their emulator to galaxy maps that will be captured by the Prime Focus Spectrograph, an instrument under development, led by the Kavli IPMU, that will be mounted on NAOJ’s Subaru Telescope.
