Apr 28, 2020

Light-driven thin-film robots that can feel


(Nanowerk Spotlight) Living organisms have inspired research into soft robots that mimic the complex motion of animals and plants. However, current soft robots have limited or no sensory capabilities, which hinders their development toward artificially intelligent robots that can feel.

The grand challenge lies in achieving highly integrated actuation and sensing mechanisms, which becomes even more difficult as robots shrink to the centimeter scale.

Now, a team of researchers from the Ho Research Group at the National University of Singapore has developed a light-driven robot that compactly integrates a strain sensor, a temperature sensor and an actuator into a thin film with a thickness of ∼115 µm.


Figure 1. Mini kirigami somatosensory light-driven thin-film robots. The scale bar is 1 cm. (Image: Ho Research Group, National University of Singapore)

This somatosensory light-driven robot (SLiR), inspired by living organisms, can simultaneously sense strain and temperature. This gives soft robots complex perception of their own body status as well as of the surrounding environment.

"We developed an integral thin-film construct that includes a non-interfering piezoresistive strain and pyroelectric temperature sensor to enable simultaneous reflex-like and locomotive capabilities," says Dr. Ho Ghim Wei, an associate professor of Electrical and Computer Engineering at the National University of Singapore. "This allows the fabrication of customizable miniature soft robots capable of simultaneous perception and motility."

Ho's team recently reported their findings in Advanced Materials ("Somatosensory, Light-Driven, Thin-Film Robots Capable of Integrated Perception and Motility").


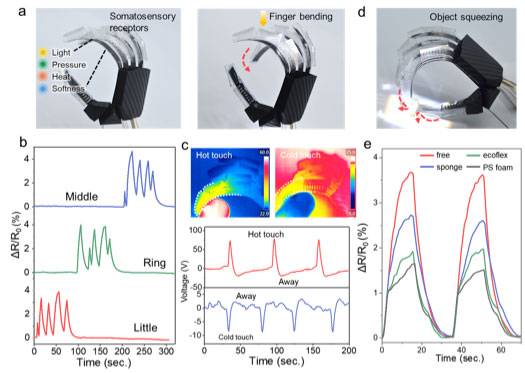

Figure 2. Somatosensory light-driven robot (SLiR) anthropomorphic hand. a) SLiR hand (left) and bending of its middle finger (right). b) Resistance response to each finger's movement. c) Voltage response to the index finger touching and moving away from a hot/cold object. d) SLiR hand squeezing a sponge. e) Resistance response for free pinching motion and for squeezing objects of different softness. (Image: Ho Research Group, National University of Singapore)

"Our flexible and monolithic thin-film composite allows arbitrary patterning of sensors and actuators, and can be transformed into diverse 2D to 3D prototypes through the kirigami," notes Dr. Xiao-Qiao Wang, the paper's first author. "The dimensions of the robot can be easily downsized."

Wang's work has focused on designing several kirigami soft robot prototypes capable of proprioceptive and exteroceptive feedback. One of these prototypes is a robotic walker that provides feedback on its detailed locomotive gaits as well as on subtle terrain textures as it treads across different surface topographies.

Another prototype is an anthropomorphic hand with somatosensory perception, capable of sensing specific finger movements, hot and cold, and hardness and softness.

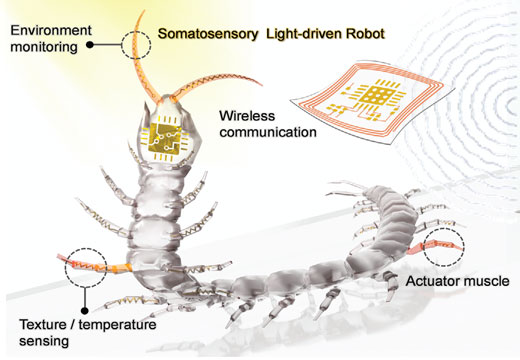

Yet another design is an untethered centipede that can walk, turn, and wirelessly sense light intensity, wind speed and human touch.


Figure 3. Untethered SLiR centipede with directional locomotion and a multitude of sensors. (Image: Ho Research Group, National University of Singapore)

"SLiRs could be used for active human-robot interaction, environmental data collection, and closed-loop control of actuation and sensing system," Ho concludes. "Our design principle and fabrication offer readily manufacturable autonomous soft-bodied systems, a step closer to mimicking the intelligence of natural organisms."


By Xiao-Qiao Wang, Ghim Wei Ho, Department of Electrical and Computer Engineering, National University of Singapore
