Posted: Mar 07, 2011

Microfluidic, label-free, high-throughput nanoparticle analyzer


(Nanowerk Spotlight) Currently, the most common methods for sizing nanoparticles extract data from bulk measurements. These techniques are inherently averaging and so are unable to effectively resolve mixtures of different-sized particles. While individual nanoparticles can be sized using electron microscopy, this approach is time-consuming and of little utility in assembling significant population statistics.

Researchers have now developed a microfluidic device for the all-electronic analysis of complex suspensions of nanoparticles in fluid. This device is capable of detecting and sizing individual and unlabeled particles as small as a few tens of nanometers in diameter at rates estimated to exceed 100,000 particles per second.

In addition to the high detection rates afforded by the device, two important features of the analyzer are its excellent size resolution and its ability to measure the absolute concentration of the particles in solution. These new capabilities were previously unavailable, and their development represents a significant advance in the field of nanoparticle analysis.

"Our device design enables the detection of individual nanoparticles with good signal-to-noise ratio at high count rates," Jean-Luc Fraikin, the first author of the paper and now a postdoctoral associate in the Marth Lab at the Center for Nanomedicine at Sanford-Burnham Medical Research Institute at UC Santa Barbara, tells Nanowerk. "The low-cost, scalable fabrication method, as well as the simple readout electronics, make this analyzer potentially useful in a wide range of applications."

In their paper in Nature Nanotechnology ("A high-throughput label-free nanoparticle analyzer"), the team, led by Andrew Cleland, describes how they used their analyzer to characterize a complex mixture of nanoparticles of different sizes within seconds, and to detect bacterial viruses in both salt solution and mouse blood plasma.

"Further, when we analyzed the distribution of nanoparticles in the native blood plasma we found an unexpected result," says Fraikin. "The concentration of nanoparticles in the blood exhibited a power-law dependence on size, with increasing concentration as the particle size decreased. The reason for this size dependence remains unexplained."
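A power-law dependence of this kind appears as a straight line on a log-log plot, so its exponent can be estimated with a linear fit. The sketch below is a minimal illustration in Python. The data are synthetic, and the exponent of -3 and the prefactor are arbitrary choices for demonstration, not values reported by the researchers.

```python
import math


def fit_power_law(diameters, concentrations):
    """Fit c = a * d**k by least squares on log(c) = log(a) + k * log(d).

    Returns the tuple (a, k).
    """
    xs = [math.log(d) for d in diameters]
    ys = [math.log(c) for c in concentrations]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    k = sxy / sxx                       # slope of the log-log line
    a = math.exp(mean_y - k * mean_x)   # intercept, converted back from log space
    return a, k


# Synthetic, made-up data following c = 1e12 * d**-3 (NOT measured values).
diameters_nm = [50, 75, 100, 150, 200]
concentrations = [1e12 * d ** -3 for d in diameters_nm]

prefactor, exponent = fit_power_law(diameters_nm, concentrations)
print(f"fitted exponent: {exponent:.3f}")
```

A negative fitted exponent corresponds to the behavior Fraikin describes: concentration rises as particle size falls.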


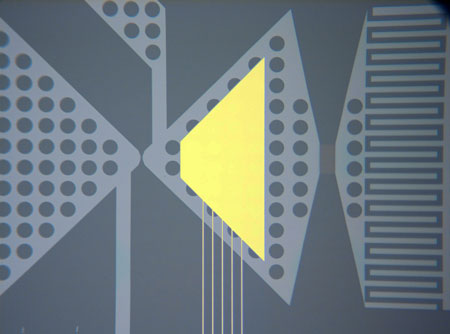

Optical micrograph of the nanoparticle analyzer. (Image courtesy of J.-L. Fraikin & A.N. Cleland/UC Santa Barbara)

This novel nanoparticle analyzer combines a simply fabricated microfluidic design with a high-throughput electrical readout. Fraikin explains that the analyzer has two components: a microfluidic channel, which directs the pressure-driven flow of analyte through the electrical sensor, carefully designed to maximize measurement bandwidth, and the sensor itself, comprising two voltage-bias electrodes and a single, optically lithographed readout electrode embedded in the microchannel.

"Together, these components form a fluidic voltage divider that yields wide-bandwidth electrical detection of particles as they pass through the nanoconstriction," explains Fraikin. "The sensing electrode is embedded in the channel between the fluidic resistor and the nanoconstriction. As a particle enters the nanoconstriction, it alters the ionic current and, because of the voltage division between the fluidic resistor and the nanoconstriction, changes the electrical potential of the fluid in contact with the sensing electrode."
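The voltage-divider principle Fraikin describes can be illustrated with a minimal Python sketch. It assumes the fluidic resistor and the nanoconstriction behave as two resistors in series across the bias voltage, with the far end of the nanoconstriction taken as ground. The function names and all numerical values are hypothetical and are not taken from the paper.

```python
def sense_potential(v_bias, r_fluidic, r_constriction):
    """Potential at the node between two series resistances.

    Assumption: the bias is applied across the fluidic resistor and the
    nanoconstriction in series, and the potential is measured relative to
    the grounded far end of the nanoconstriction.
    """
    return v_bias * r_constriction / (r_fluidic + r_constriction)


def particle_signal(v_bias, r_fluidic, r_constriction, delta_r):
    """Change in sensed potential when a particle raises the
    constriction resistance by delta_r."""
    baseline = sense_potential(v_bias, r_fluidic, r_constriction)
    occupied = sense_potential(v_bias, r_fluidic, r_constriction + delta_r)
    return occupied - baseline


# Illustrative values only (ohms and volts); not device parameters.
signal = particle_signal(
    v_bias=1.0,
    r_fluidic=10e6,
    r_constriction=10e6,
    delta_r=0.1e6,
)
print(f"potential change at sensing node: {signal * 1e3:.3f} mV")
```

In this toy model, a particle that raises the constriction resistance raises the potential at the sensing node, and an empty constriction gives zero signal.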

The analysis chips are simply fabricated at low cost, using single-layer optical lithography for electrode patterning, and well-established micromoulding techniques for defining the fluid channel that permit many chips to be made from a single mould.

This system is highly sensitive: it can distinguish particles whose diameters differ by only a few percent.

The team's initial motivation for this research arose through conversations with biologists about previous work in Cleland's lab, in which they developed a high-throughput electronic detector for larger microparticles and cells ("High-bandwidth radio frequency Coulter counter").

"Our collaborators informed us of the significant need for similar technologies for particle analysis on the nanoscale for studying blood and other potential clinical applications, and we set to work," recounts Fraikin. "Only once we became more deeply involved in the project did we begin to appreciate the broader context for this work, given the range of applications for which nanoparticles in this size range are being developed, and the lack of practical sizing technologies that were available."

The researchers hope to see this analysis technology used in a variety of applications. Nanoparticles are being developed for use in a broad range of industries, including the alternative energy sector (in nanophotovoltaics and supercapacitors) and medicine (in drug delivery and imaging applications). This novel nanoparticle analyzer could be used directly for quality analysis and quality control of nanoparticle synthesis in any one of these sectors.

Fraikin points out that applications in more fundamental research areas exist as well. "As a specific example, consider studies that use synthetic nanoparticles and genetically modified viruses to understand the biomolecular mechanisms of cancer ("Coadministration of a Tumor-Penetrating Peptide Enhances the Efficacy of Cancer Drugs"). In many of these studies, the concentration of nanoparticles in a fluid sample forms the metric for interpreting the experimental results. In our paper, we showed that our analyzer could be used in precisely this application, with great improvements in simplicity and the time required for analysis."

The rapid development of so many new types of nanoparticles and nanoparticle applications entails a great need for tools capable of the physical characterization of these particles. While the nanoparticle analyzer discussed here measures two very basic physical properties of nanoparticles – their size and concentration in solution – many other characteristics of nanoparticles, such as surface charge, surface roughness, detailed particle shape and electric or magnetic polarizability, are critical to their applications and will require tools for the high-throughput measurement of these properties as well.

Seems like the team has their work cut out for them.

By Michael Berger

Michael is author of three books by the Royal Society of Chemistry: Nano-Society: Pushing the Boundaries of Technology, Nanotechnology: The Future is Tiny, and Nanoengineering: The Skills and Tools Making Technology Invisible

Copyright © Nanowerk LLC

