Showing Spotlights 1673 - 1680 of 2786 in category All (newest first):

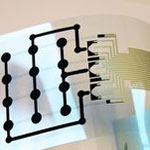

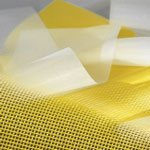

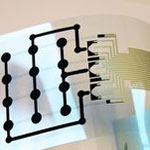

Printed electronics is one of the most important new enabling technologies. It will have a major impact on most business activities, from publishing and security printing to the healthcare, automotive, military and consumer packaged goods sectors. With recent advances, power and energy storage can be integrated into the printing process, making the potential applications of printed electronics even more ubiquitous. Currently, though, the more complex printed components that require a combination of different classes of devices still suffer from drawbacks in performance, cost, and large-scale manufacturability. Researchers have now succeeded in fabricating a multi-component sensor array by simple printing techniques - all components (polymer sensor array, organic transistors, electrochromic display) are integrated on the same flexible substrate.

Apr 13th, 2011

Life as we know it is dominated by friction, the interaction between moving objects. Friction controls our everyday lives, from letting us walk to work, to holding a cup of tea. Friction forces act wherever two solids touch. Although friction has been investigated for hundreds of years - in the 15th century, Leonardo da Vinci was the first to enunciate two laws of friction - it is surprisingly difficult to examine how friction works at the nanoscale level due to the sheer difficulty of bringing nanoscale objects into contact and imaging them at the same time. Researchers have now demonstrated the ability to bring nanoscale objects together, rub them repeatedly across one another and see how friction changes nanosized materials in real time.

Apr 12th, 2011

Life cycle assessment is an essential tool for ensuring the safe, responsible, and sustainable commercialization of a new technology. Given the lack of data on the large-scale impact of nanotechnology, life cycle assessments of potential nanoproducts should form an integral part of nanotechnology research at early stages of decision making, as they can help in screening different process alternatives. A key input to any meaningful life cycle assessment is the total quantity of the material under investigation. Exposure assessments in particular often begin with estimates based on the total amount of a material produced, with the assumption that some fraction of that material will ultimately be released to the environment. As it turns out, nobody - no research institution, no government agency, no industry association - knows even vaguely what quantity of nanomaterials is manufactured today.

Apr 11th, 2011

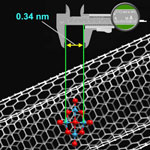

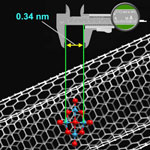

Metrology is the science of measurement, and nanometrology is the part of metrology that relates to measurements at the nanoscale. Many governments worldwide have existing nanotechnology policies and are taking preliminary steps towards nanometrology strategies, for example in support of pre-normative R&D and standardization work. In this Nanowerk Spotlight, we look at the European Commission-funded project Co-Nanomet as an example of the importance of nanometrology as a key enabling technology for quality control at the nanoscale. While a first and obvious benefit of metrology is its potential to improve scientific understanding, a second, equally important but less obvious benefit is closely linked to the concepts of quality control and conformity assessment, which means deciding whether a product or service conforms to specifications.

Apr 8th, 2011

Many strategies to develop stretchable electronics rely on engineering new constructs from existing materials, e.g. ultrathin, stretchable silicon structures. Another approach is to fabricate ultrathin CMOS circuits on stretchable materials such as polymers. Nanotechnology allows a novel route to materials and structures that can be used to develop human-friendly devices with realistic functions and abilities that would not be feasible by mere extension of conventional technology. New research suggests devices that can act as part of human skin or clothing, and can therefore be used ubiquitously. Such devices could eventually find a wide range of applications in recreation, virtual reality, robotics and health care.

Apr 6th, 2011

A few years ago, researchers determined that the stiffness of cancer cells affects the way they spread. When cancer becomes metastatic, or invades other organs, the diseased cells must travel throughout the body. Because the cells need to enter the bloodstream and maneuver through tight anatomical spaces, cancer cells are much more flexible, or softer, than normal cells. With this knowledge, researchers wanted to understand the cell mechanics associated with the anticancer treatment of cells from patient samples; in particular, they were interested in reporting the effects of green tea extract because it is a natural product, has known anti-cancer effects, and is widely consumed in beverage form around the world.

Apr 5th, 2011

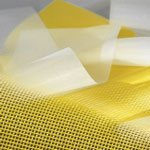

In order to find replacement materials for ITO, scientists have been working with carbon nanotubes, graphene, and other nanoscale materials such as nanowires. While many of these approaches work fine in the lab, scaling them up has usually been an issue. Researchers at Empa, the Swiss Federal Laboratories for Materials Science and Technology, have now demonstrated another solution: they presented a transparent and flexible electrode based on a precision fabric with metal and polymer fibers woven into a mesh. The team demonstrated organic solar cells fabricated on their flexible precision fabrics as well as on conventional glass/ITO substrates and found very similar performance characteristics.

Apr 4th, 2011

It started innocently enough with isolated instances of smoke coming out of computers. Then networks crashed. Now, programs are malfunctioning on a large scale, shutting down the Vatican's huge computer infrastructure, on which it depends to manage its billions upon billions of investment dollars, real estate portfolios, and art collections. It is difficult to obtain all the details, but it appears that some form of nanotechnology got out of control. Surprisingly, and against its deeply ingrained reflexes of total openness and transparency, the Vatican initially tried to cover the whole thing up. Then a tabloid reporter got wind of what had happened, and the whole thing became public with an article today (April 1) in an Italian tabloid that had this sensation-seeking headline splashed all over the front page: "Gay nanobots ballano Bunga-Bunga in Vaticano" - Gay nanobots dance Bunga-Bunga in the Vatican.

Apr 1st, 2011

Subscribe to our Nanotechnology Spotlight feed