Showing Spotlights 1329 - 1336 of 2790 in category All (newest first):

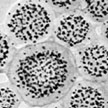

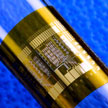

One major challenge in contemporary science is to accomplish with synthetic building blocks what nature does so well: creating complex and functional structures through multiple levels of assembly of biomolecules. Bottom-up engineering of nanostructures that assemble themselves from polymer molecules is bound to become a useful tool in chemistry. To that end, researchers are using block-copolymer-based micellar architectures to form hierarchical superstructures with defined shape and geometry. Researchers have now demonstrated that nanoparticles tethered with block copolymers resemble micelles that can assemble into well-ordered higher-level mesostructures.

Jun 12th, 2013

Atomically precise manufacturing (APM) can be understood through physics, engineering design principles, proof-of-concept examples, computational modeling, and parallels with familiar technologies. APM is a prospective production technology based on guiding the motion of reactive molecules to build progressively larger components and systems. Bottom-up atomic precision can enable production with unprecedented scope (in terms of product materials, components, systems, and performance), while fundamental mechanical scaling laws can enable unprecedented productivity.

Jun 11th, 2013

Nanotechnology-enabled, paper-based sensors promise to be simple, portable, disposable, low-power, and inexpensive devices that will find ubiquitous use in medicine, the detection of explosives and toxic substances, and environmental studies. Since monitoring needs for environmental, security, and medical purposes are growing fast, the demand for low-cost, low-power sensors with high sensitivity and selectivity is increasing as well. Paper has been recognized as a particular class of supporting matrix for accommodating sensing materials. A team of Chinese researchers has now developed low-cost gas sensors by trapping single-walled carbon nanotubes in paper and demonstrated their effectiveness by testing them on ammonia.

Jun 10th, 2013

In order to regulate nanomaterials and to determine mandatory product labelling, general agreement on what the term 'nanomaterial' means must first be reached. The EU Parliament requires that a definition shall be science-based and comprehensive. Furthermore, for regulatory measures in individual sectors, it shall be unambiguous, flexible, and easy and practical to handle. Over the past few years, various institutions have come up with suggestions for a definition, leading to a recommendation of the EU Commission, which is finally being adopted into new and existing EU legislation. Some provisions in this proposal are controversial, and the implementation into specific sectoral legislation constitutes a major challenge.

Jun 6th, 2013

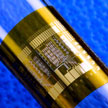

Flexible electronics are all the rage these days. They promise entirely new design possibilities: for instance, tiny smartphones that wrap around our wrists, flexible displays that fold out like newspapers or as large as a television, or photovoltaic cells and reconfigurable antennas that conform to the roofs and trunks of our cars. This article reviews the progress in single-walled CNT and graphene-based flexible thin-film transistors with respect to material preparation, fabrication technique, and transistor performance control, in order to clarify the possible scale-up methods by which mature and realistic flexible electronics could be achieved.

Jun 5th, 2013

The European Commission acknowledges that nanomaterials are revolutionary materials and that important challenges exist in regard to hazard and exposure assessments. Yet it concludes that current risk-assessment methods are applicable to nanomaterials. Scientists argue that significant changes to REACH and the accompanying annexes are required to answer the call made by the public, downstream users, and progressive businesses for clearer and more definite regulatory rules specific to nanomaterials.

May 28th, 2013

The degree of competitiveness in sports has been remarkably impacted by nanotechnology, as by any other innovation in materials science. Within the niche of sports equipment, nanotechnology offers a number of advantages and immense potential to improve sporting equipment, making athletes safer, more comfortable, and more agile than ever. Baseball bats, tennis and badminton racquets, hockey sticks, racing bicycles, golf balls and clubs, skis, fly-fishing rods, and archery arrows are some of the sporting equipment whose performance and durability are being improved with the help of nanotechnology. Nanomaterials such as carbon nanotubes, silica nanoparticles, nanoclays, and fullerenes are being incorporated into various sports equipment to improve the performance of athletes as well as of the equipment itself.

May 27th, 2013

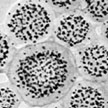

Nanomaterials hold promise as synthetic vaccines. They have the ability to deliver cargo to specific immune cells and modulate the resulting immune response. Compared to natural vaccine vectors, including engineered viruses or attenuated pathogens, synthetic nanoscale vaccines are safer, more controlled, and have the potential to be more effective. Nanoscale vaccines may also prevent, or even treat, a wider range of diseases, including cancer. Researchers have now developed nanoscale polymer micelles that elicit both humoral and cellular immunity. The constructs could help in the fight against infectious diseases and cancer.

May 24th, 2013

Subscribe to our Nanotechnology Spotlight feed