Showing Spotlights 1913 - 1920 of 2781 in category All (newest first):

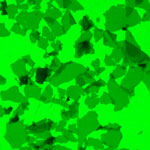

Graphene-based sheets such as pristine graphene, graphene oxide, or reduced graphene oxide are essentially single atomic layers of a carbon network. They are the world's thinnest materials. A general visualization method that allows quick observation of these sheets would be highly desirable, as it could greatly facilitate sample evaluation and manipulation and provide immediate feedback to improve synthesis and processing strategies. Current imaging techniques for observing graphene-based sheets include atomic force microscopy, transmission electron microscopy, scanning electron microscopy and optical microscopy. Some of these techniques are rather low-throughput, and all of them require special types of substrates, which greatly limits the ability to study these materials. Researchers from Northwestern University have now reported a new method, fluorescence quenching microscopy, for visualizing graphene-based sheets.


Dec 14th, 2009

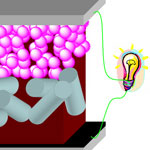

As the field of bionanotechnology develops, it may one day become possible to use biological nanodevices such as nanorobots for in situ, real-time, in vivo diagnosis and therapeutic intervention at specific targets. A prerequisite for designing and constructing wireless biological nanorobots is an electrical source that is continuously available in the operational biological environment (i.e. the human body). Several possible sources - temperature displacement, kinetic energy derived from blood flow, and chemical energy released from biological motors inside the body - have been designed to provide electrical power that can operate reliably in the body. Researchers now report the construction of a 980-nm laser-driven photovoltaic cell that can provide sufficient power output even when covered by thick layers of biological tissue.


Dec 11th, 2009

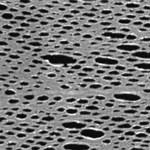

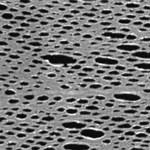

Ultrathin nanosieves with a thickness smaller than the size of the pores are especially advantageous for applications in materials separation since they result in an increase of flow across the nanosieve. Separation of complex biological fluids can particularly benefit from novel, chemically functionalized nanosieves, since many bioanalytical problems in proteomics or medical diagnostics cannot be solved with conventional separation technologies. Researchers in Germany have now fabricated chemically functionalized nanosieves with a thickness of only 1 nm - the thinnest free-standing nanosieve membranes that have been reported so far. The size of the nanoholes in the membranes can be flexibly adjusted down to 30 nm by choosing appropriate conditions for lithography.


Dec 9th, 2009

Safe drinking water has been and increasingly will be a pressing issue for communities around the world. In developed countries it is about keeping water supplies safe while in the rest of the world it is about making it safe. The potential impact areas for nanotechnology in water applications are divided into three categories - treatment and remediation, sensing and detection, and pollution prevention. Within the category of sensing and detection, of particular interest is the development of new and enhanced sensors to detect biological and chemical contaminants at very low concentration levels. Testing of water against a spectrum of pathogens can potentially reduce the likelihood of many diseases from cancer to viral infections.


Dec 8th, 2009

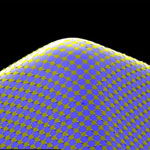

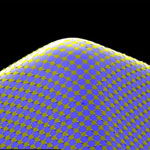

The key to most visionary electronic applications will be printability, i.e. that the circuits can be applied to any material, and flexibility, i.e. that they can adhere to any shape or form - even body parts. Imagine an ultrathin film of electronic circuits attached to internal organs like your heart to monitor vital functions. All existing forms of electronics are built on the two-dimensional, planar surfaces of either semiconductor wafers or plates of glass. Mechanically flexible circuits based on organic semiconductors are beginning to emerge in commercial applications, but they can only be wrapped onto the surfaces of cones or cylinders - they cannot conform to spheres or any other type of surface that exhibits non-zero Gaussian curvature. Applications that demand conformal integration, e.g. structural or personal health monitors, advanced surgical devices, or systems that use ergonomic or bio-inspired layouts, require circuit technologies in curvilinear layouts.


Dec 7th, 2009

Nanotechnology-based catalytic techniques are having a profound impact on clean energy research and development, ranging from hydrogen and liquid fuel production to clean combustion technologies. In this area, catalyst stability is paramount for technical application and remains a major challenge, even for many conventional catalysts. Thermal stability in particular is a challenge across many currently discussed technical applications and an obstacle for many nanocatalyst-enabled devices, from sensors to fuel production. Fuel processing technologies (hydrogen and/or liquid fuel production from fossil and renewable resources, clean combustion) typically proceed under especially severe conditions (high temperatures, high throughput, contaminated fuel streams, etc.) and hence require particular attention to catalyst stabilization. Even many processes that run at much lower temperatures, such as fuel cells, still need catalysts with improved stability.


Dec 4th, 2009

We would like to invite you, as a reader of Nanowerk, to join us at the MicroNanoTec trade show at HANNOVER MESSE 2010 completely free of charge. HANNOVER MESSE will embrace microsystems technology and nanotechnology in a single trade fair under the new name MicroNanoTec. Microsystems technology and nanotechnologies have formed an important part of HANNOVER MESSE for many years. In the past they have mainly been presented at the leading trade fair MicroTechnology. The change of name signals a further expansion of microtechnology and nanotechnology at HANNOVER MESSE. The world's leading showcase for industrial technology is staged annually in Hannover, Germany. The next HANNOVER MESSE will be held from 19 to 23 April 2010.


Dec 3rd, 2009

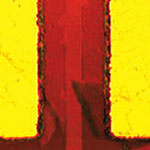

Chip structures have already reached nanoscale dimensions, but as they continue to shrink below the 20 nanometer mark, ever more complex challenges arise and scaling no longer appears to be economically feasible. And below 10 nm, the fundamental physical limits of CMOS technology will be reached.

One promising material that could enable the chip industry to move beyond the current CMOS technology is graphene, a monolayer sheet of carbon. Notwithstanding the intense research interest, large scale production of single layer graphene remains a significant challenge. Researchers at Cornell University have now reported a new technique for producing large scale single layer graphene sheets and fabricating transistor arrays with uniform electrical properties directly on the device substrate.


Dec 2nd, 2009

