Showing Spotlights 1561 - 1568 of 2787 in category All (newest first):

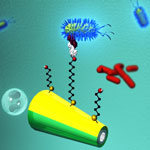

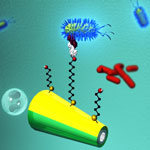

Early detection of foodborne pathogenic bacteria is critical to prevent disease outbreaks and preserve public health. This has led to urgent demands to develop highly efficient strategies for isolating and detecting these microorganisms in connection with food safety, medical diagnostics, water quality, and counter-terrorism. Conventional techniques to detect E. coli and other pathogenic bacteria are time-consuming, labor-intensive, and inadequate as they lack the ability to detect bacteria in real time. Thus, there is an urgent need for alternative platforms for the rapid, sensitive, reliable and simple isolation and detection of pathogens. Taking a novel approach to isolating pathogenic bacteria from complex clinical, environmental and food samples, researchers have developed a nanomotor strategy that involves the movement of lectin-functionalized microengines. Receptor-functionalized nanoswimmers offer direct and rapid target isolation from raw biological samples without preparatory and washing steps.

Dec 8th, 2011

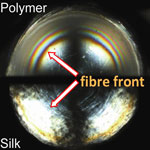

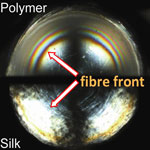

Researchers have, for the first time, compared the energetic cost of silk and synthetic polymer fiber formation and demonstrated that, if we can learn how to spin like the spider, we should be able to cut the energy costs for polymer fiber processing by 90%, leaving aside the heat treatment requirements. The two routes of polymer fiber-spinning - one developed by nature and the other developed by man - show striking similarities: both start with liquid feedstocks sharing comparable flow properties; in both cases the 'melts' are extruded through convergent die designs; and for both, 'spinning' results in highly ordered semicrystalline fibrous structures. In other words, analogous to the industrial melt spinning of a synthetic polymer, in the natural spinning of a silk the molecules (proteins) align (refold), nucleate (denature) and crystallize (aggregate).

Dec 7th, 2011

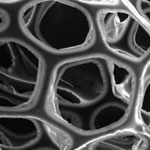

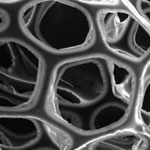

Ultra- or supercapacitors are emerging as a key enabling storage technology for use in fuel-efficient transport as well as in renewable energy. These devices combine the advantages of conventional capacitors - they can rapidly deliver high current densities on demand - and batteries - they can store a large amount of electrical energy. Supercapacitors offer a low-cost alternative source of energy to replace rechargeable batteries. Although the energy density of capacitors is quite low compared to batteries, their power density is much higher, allowing them to provide bursts of electric energy. Researchers have now fabricated novel high-performance sponge supercapacitors using a simple and scalable method. Their results show that three-dimensional electrodes potentially have a huge advantage over conventional mixed electrode materials.

Dec 6th, 2011

Directed self-assembly of block copolymers is a candidate lithography technique for use in future nanoelectronics and patterned media, with resolutions down to the sub-10 nm domain. Variations of this effective nanofabrication technique have been used to write periodic arrays of nanoscale features into substrates at exceptionally high densities with resolutions that are difficult or impossible to achieve with top-down techniques alone. However, in many cases these approaches are either too costly or too complex due to the required number of processing steps, for instance expensive, time-consuming substrate pre-patterning. Researchers at the Molecular Foundry have now shown that block copolymers can be aligned on an unpatterned substrate using a removable and reusable mold applied from above.

Dec 5th, 2011

Many batteries still contain heavy metals such as mercury, lead, cadmium, and nickel, which can contaminate the environment and pose a potential threat to human health when batteries are improperly disposed of. Not only do the billions upon billions of batteries in landfills pose an environmental problem, they are also a complete waste of a potentially cheap raw material. Unfortunately, current recycling methods for many battery types, especially the small consumer-type ones, don't make sense from an economic point of view since the recycling costs exceed the value of the recoverable metals. Researchers in India have now addressed the recycling of consumer-type batteries. They report the recovery of pure zinc oxide nanoparticles from spent Zn-Mn dry alkaline batteries.

Dec 1st, 2011

Surface-enhanced Raman spectroscopy (SERS) is a powerful research tool that is being used both to detect and analyze chemicals and as a non-invasive tool for imaging cells and detecting cancer. It has also been employed for label-free sensing of bacteria, exploiting its tremendous enhancement of the Raman signal. SERS can provide the vibrational spectrum of the molecules on the cell wall of a single bacterium in a few seconds. Such a spectrum is like a fingerprint of the molecules and therefore could be exploited as a means to quickly identify bacteria without the need for a time-consuming bacteria culture process, which typically takes a few days to several weeks depending on the species of bacteria. To practically apply SERS to the early diagnosis of bacteremia - the presence of bacteria in the blood - researchers have managed to capture bacteria in a patient's blood onto the SERS substrate.

Nov 29th, 2011

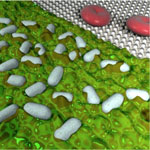

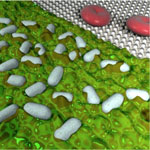

Graphene research papers are popping up left and right at what seems like an accelerating speed and growing volume. One of the areas that is seeing vast research interest is the biological interfacing of graphene, for instance for sensor applications. Today, we are looking at another exciting graphene bio application where a graphene sensor is integrated with microfluidics to sense malaria-infected red blood cells at the single-cell level. Specific binding between ligands on positively charged knobs of infected red blood cells and receptors functionalized on graphene inside microfluidic channels induces a distinct conductance change. Conductance returns to its baseline value when the infected cell exits the graphene channel.

Nov 28th, 2011

Naturally occurring nanomaterials can be found everywhere in nature, and only with recent advances in instrumentation and metrology equipment are researchers beginning to locate, isolate, characterize and classify the vast range of their structural and chemical varieties. Scientists are beginning to recognize that all sources of nanomaterials are important in evaluating the possible impact of nanoscale materials on human health and the environment; however, perhaps the greatest benefit of studying these materials will be their ability to inform researchers about the manner in which nano-sized materials have been a part of our environment from the beginning.

Nov 24th, 2011

Subscribe to our Nanotechnology Spotlight feed
