Showing Spotlights 257 - 264 of 546 in category All (newest first):

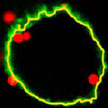

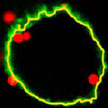

Understanding the purpose of the molecular modifiers that annotate DNA strands - called epigenetic markers - and how they change over time will be crucial in understanding biological processes ranging from embryo development to aging and disease. But working out just how the markers function, and what different markers mean, is painstaking work that still has a long way to go. Advancing this research field, scientists have now reported the first direct visualization of individual epigenetic modifications in the genome. This is a technical and conceptual breakthrough, as it allows researchers not only to quantify the amount of modified bases but also to pinpoint and map their positions in the genome.

Jul 24th, 2013

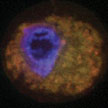

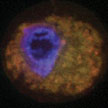

When nanoparticles enter the human body, for instance as part of a nanomedicine application, they come into immediate contact with a collection of biomolecules, such as proteins, that are characteristic of that environment, e.g. blood. A protein may become associated with the nanomaterial surface during a protein-nanomaterial interaction, in a process called adsorption. The layer of proteins adsorbed to the surface of a nanomaterial at any given time is known as the protein corona. The type and amount of proteins in the corona depend strongly on several factors, including the physicochemical properties of the nanoparticles, the protein source, the protein concentration, and temperature.

Jul 23rd, 2013

The study of individual cells is of great importance in biomedicine. Many biological processes occur inside cells, and these processes can differ from cell to cell. The development of micro- and nanoscale tools smaller than cells will help in understanding the cellular machinery at the single-cell level. All kinds of mechanical, biochemical, electrochemical and thermal processes could be studied using these devices. Researchers have now demonstrated a nanomechanical chip that can be internalized by living cells to detect intracellular pressure changes, using an interrogation method based on confocal laser scanning microscopy.

Jul 18th, 2013

Despite significant advances in the medical and surgical management of severe thermal injury, wound infection and subsequent sepsis persist as frequent causes of morbidity and mortality for burn victims. This is due not only to the extensive compromise of the protective barrier against microbial invasion, but also to growing pathogen resistance to our therapeutic options. Researchers have now demonstrated that encapsulating amphotericin B, an intravenously administered, potent fungicidal polyene macrolide, in nanoparticles increased its killing impact against numerous Candida species, was more effective at preventing candidal biofilm formation, and cleared Candida infection in a mouse burn model more effectively than solubilized amphotericin.

Jun 21st, 2013

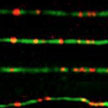

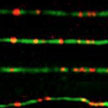

While nanoparticles are emerging as drug carriers for targeted nanomedicines, preclinical assays to test nanoparticle efficacy are hampered by the lack of methods to quantitatively determine internalized particles. A novel method could pave the way for preclinical testing of nanoparticles to establish dose-efficacy relationships and to optimize biophysical and biochemical parameters in order to make better drug delivery vehicles. The team demonstrated that it is possible to determine the exact number of nanoparticles inside a cell by combining three methods with a mathematical model that they developed to link the data from those methods.

Jun 18th, 2013

Under an applied magnetic field, iron oxide nanoparticles trigger cancer cell death by bursting intracellular organelles. These findings offer a new strategy to treat cancer using nanomaterials. Researchers may be able to administer magnetic nanoparticles externally, allow them to accumulate at the tumor site, and then expose them to a magnetic field to induce cancer cell death. In the past, antibodies and small molecules were used to trigger apoptosis in cancer cells. However, cancer cells often adapt to resist these treatments. Because iron oxide nanoparticles cause physical damage to cancer cells, it is difficult for the cells to develop resistance.

Jun 14th, 2013

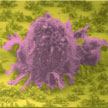

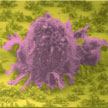

Fractals are structures built up from repeated, rescaled copies of a simple shape to make a complex one. A fractal is a geometric structure that can repeat itself towards infinity: zooming in on a fragment of it, the original structure becomes visible again. In biological systems, fractal structures can be found everywhere - bronchial trees, vasculature, and nerve cells. These structures can provide a specific interfacial contact mode that is highly efficient for absorbing sunlight, transporting nutrients, exchanging oxygen and carbon dioxide, and signal transduction. Researchers have now demonstrated the fabrication of programmable fractal gold nanostructured interfaces, their highly specific recognition of rare cancer cells from whole blood samples, and their effective release capability.

Jun 13th, 2013

Nanomaterials hold promise as synthetic vaccines. They have the ability to deliver cargo to specific immune cells and modulate the resulting immune response. Compared to natural vaccine vectors, including engineered viruses or attenuated pathogens, synthetic nanoscale vaccines are safer, more controlled, and have the potential to be more effective. Nanoscale vaccines may also prevent, or even treat, a wider range of diseases, including cancer. Researchers have now developed nanoscale polymer micelles that elicit both humoral and cellular immunity. The constructs could help in the fight against infectious diseases and cancer.

May 24th, 2013

Subscribe to our Nanotechnology Spotlight feed
